Author Archives: Joe

Home security – installing a new system with alarm.com (Part 1)

Back in 2007 I wanted to get a home security system that was more than just the standard type of system. I stumbled upon a do-it-yourself system from a company that at the time was called InGrid, later renamed LifeShield. It had everything I wanted – keyfobs, a mobile app for my phone, a nice web interface, a modular and redundant design, etc. I had that system until yesterday (July 10th, 2013).

I wasn’t actively looking to replace my home security system, but after talking with a friend who happens to work for alarm.com, I was convinced that it was time for an upgrade. Several of the components on the old system were starting to wear out and fail and I was growing tired of clearing problems at the panel.

Hello GE Simon XTi by Interlogix and powered by alarm.com through SafeMart!

When I received the GE Simon XTi system it came with 3 door/window sensors (the large model), one keyfob, one motion sensor and one CDMA cellular module. This wasn’t enough to cover all the entry points into my home, so I ordered a few more components through eBay. I also decided to pull out the CDMA cellular module since, quite honestly, CDMA has terrible coverage in my particular area. What I ended up getting is a GSM cellular module with service through AT&T, which does have excellent coverage, especially since I live less than half a mile from one of their towers.

Most of the parts arrived yesterday, and once I was home from work I began the install and setup process. This blog post will cover the setup and installation of the basic home security system and its components. The second part, which I will post later on, will cover my experience setting up Z-Wave devices for home automation and control.

So let’s get to it, first off – the GE Simon system came in a very nicely designed box with all the components packed neatly and safely inside. Packaging sometimes gets overlooked but it’s actually very important for a number of reasons such as safety, marketing, protection from damage during shipping, etc.

The next step was to unbox everything and begin setting up the control panel and connecting the wireless sensors to the system.

Before I could do that I had to set up the panel and get it ready to power on. This entails installing the battery pack, which is simple and nothing special. The system does not come with a standard power cord. Instead you get an AC power pack, and you manually connect one end of the power cable to the leads on the AC pack and the other end to the leads on the back of the panel. Since it’s AC power there is no polarity, so it doesn’t matter which wire goes where on the panel. Each input connection is labeled “AC Input”.

I also installed the cellular module into the optional bay on the back of the Simon XTi. It simply snaps into place and you can secure it with a screw which is provided in the box. It comes with an onboard antenna but you also get an external antenna extension. I want the best possible signal on my system so I installed the external antenna wire. You just snap it onto the antenna connector located on the cellular module and run it out of the open slots on the back of the panel casing. While doing that I also connected my phone line (although not necessary at all). I noticed the panel also has an ethernet port, but I didn’t bother with that either.

Each standard sensor comes in a white box labeled with the GE part numbers and other information. I wanted to approach this install as someone who had no idea what to do or how to proceed. I was somewhat disappointed that the instructions for each sensor did not mention anything about the specifics of adding the sensor to the XTi panel.

You get a little bit of instruction on how to put the system into learning mode but that’s about it. It would be nice if the manufacturer included information about the sensor, such as which sensor group to add it to, product codes, etc. I was genuinely confused at first when adding sensors since the XTi panel asks you for an optional product code, which you cannot find anywhere on the sensor or its documentation.

A bit of advice: be ready to add sensors and remove them only to re-add later. When setting up my system I actually worked on it over the course of two days and I wasn’t ready for activation right away. I had time to tinker with the sensors and ended up removing and re-adding them perhaps 3 times before I was finally satisfied with the configuration. Part of what I didn’t realize at first is that you can edit the sensor name on the panel when you add each sensor, and also add extra descriptions that get appended to the main description. The default for many of the sensors is “Front Door”. Obviously you should change that to something more descriptive.

The keyfobs, for example, were the easiest to program into the system because you simply hold the arm and disarm buttons down at the same time to pair one with the panel. But I had 3 of them and had to do a little internet research to find out what group I should put them in and how to name them so I could show who armed or disarmed the system (since 3 different people will now have a keyfob).

What I did was to pair each keyfob and let the primary description be “keyfob”. Under that I added another description line with just one letter, the first initial of the person who would own that keyfob. So mine, for example, was named “keyfob” and then, under that, the letter “J”. From there you can log in to the Alarm.com web interface and rename the sensor with a more usable or familiar name for the person who will use it. These friendly names are what you see in alerts on the website and on the mobile app as well as in email notifications. It would be nice if the panel let you type in your own description, but instead you have to select from a predefined list of labels.

One minor gripe about the keyfobs. On the old system the keyfobs had a separate button for arming in stay or away modes, but the new keyfobs with the GE system have one arm button and the number of times you press the arm button determines how the system is armed. This will take some getting used to and seems less than ideal to me. But with limited space on the keyfob I can understand this design. One press to arm in stay mode, two consecutive presses to arm in away mode.

Next I installed the motion sensor and smoke detector. These were very straightforward and also easy to program. I did have a few minutes of confusion over the smoke detector, however. After I paired it with the panel I started getting error messages complaining about the serial number already existing. It turned out that the system was still in learn mode after I had paired the sensor with the panel, and I was still tinkering with the smoke detector, which triggered the pairing process again.

Another Tip: after pairing the smoke detector, close out of the learning mode on the panel so that you don’t end up getting confused and troubleshooting a problem that doesn’t exist. I also had to determine the proper group for adding the smoke detector. I don’t recall seeing any documentation with the system that gives you a table of which groups are for which types of sensor. I had to find this information in a YouTube video that SafeMart provided. It would be nice if this information were either included with the system or included in the documentation that comes with each sensor.

My only gripe about the motion sensor is that it is not labeled up or down to help you determine which way to mount it. I have not yet invested the time to research this, so it’s very possible that I have mine incorrectly mounted.

I am using 3 of the larger crystal door sensors, but I also ordered and received several of the micro door/window sensors. I highly recommend the micro sensors over the larger ones. The smaller sensors come with self-adhesive pads, making them easier to install in some spots, but the larger sensors do not come with any adhesive pads at all and seem to be intended for screw mounts. I had to get creative in how I mounted several of my sensors. A minor gripe about the door/window sensors: both types have to be pried open to pair them with the panel. This is something I needed a small screwdriver for. It’s just a one-time thing and not a big deal, but it does make initial pairing more difficult, as the plastic housing on the sensors is not that easy to get open.

Another unexpected issue I ran into was when I tried to remove the old sensors from the previous security system. They were installed using very strong self-adhesive pads and when I was removing them, they did come off, but they took chunks of drywall with them. I now have 3 windows in my house with a big brown spot where the old sensors used to be mounted. The adhesive is very strong and if you aren’t extremely careful you can damage the surface you are applying them to if you ever need to remove them. At least with screws you only end up with a small hole or two, which is better than a large missing patch of drywall and paint.

I still need to add just a few more sensors to cover all of my windows and doors, but at this point I have the basic home security system installed and functioning. I tested the sensors to make sure that I had everything set up correctly. I did the pairing one sensor at a time and then placed each one where it belonged so that I didn’t get anything mixed up.

Once all the hardware was in place it was time to activate. That’s where SafeMart and alarm.com come in. I worked with a number of people from SafeMart to get my system activated, and it was actually surprising to me that so many people were involved in the activation process. First there was “Jared”, who took my basic info and payment information for the monitoring plan I selected. Then there was “Michelle” from the monitoring department, who walked me through creating my SafeMart customer portal account. She also scheduled a conversation with an installer, “Greg”, who called me back an hour and a half later to walk me through the actual technical activation process.

Once I was on the phone with Greg, it was mostly a time of waiting for him to create my alarm.com account and register my cellular module in their system. Another Tip: you will need to reboot the XTi panel after they activate the cellular module, since apparently the module registers itself at boot up. The reboot process involves disconnecting both the battery and AC power. We waited 1 minute and then put the battery back in and re-connected AC power. Once done, the cellular module registered successfully and tested out okay.

At this point, my system is in a 72 hour test phase where I can play around with the configuration and test it out thoroughly. I want to make sure that everything is securely installed and functioning properly so that I don’t cause any false alarms once the monitoring exits the test phase. Right now two of my sensors have an “N/A” status at the panel which I’ll need to investigate, but otherwise everything seems to be working well.

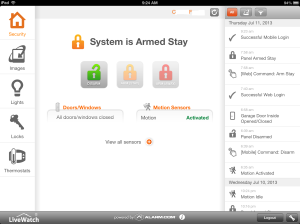

Once my alarm.com account was set up and I had completed the basic configuration online, it was time to play with the mobile apps for our iPhones. I installed the alarm.com app on two iPhones and created a separate login account for my wife to use so that we could each have separate geo-location triggers. The app itself has a clean, modern look with black and orange highlights (on the iPhone). It works well and I am happy with the functionality it provides. I have only two minor gripes about the mobile app for iPhone/iPad.

1. There is branding at the bottom that says “powered by alarm.com”. I would personally prefer if this were part of the header logo rather than something that looks like an ad placed at the bottom of the app. I find it distracting, and it reminds me of ad banners that make me want to look for the paid version of the app without the branding.

2. The app works in a “pull” mode, where it’s not necessarily real-time information on your screen. Some of the screens have a refresh button or a swipe down to refresh. Nothing wrong with that, but it would be more convenient for the user if the info were refreshed in real time while the app is open. Again nothing serious, just minor critiques.

I’m sure the alarm.com app will continue to improve and the user interface will be refined as time goes on. Just before I cancelled service with my old home security system they had recently released a new version of their mobile app “LifeShield” which has a very nice UI and real time updates. I’m sure alarm.com could do something similar and given time I suspect that their app will also continue to improve. In all fairness it was many years before LifeShield updated its mobile app. (note: I like the iPad version of the app better than the iPhone version).

The last thing I want to talk about is the geo-location feature. This is something that I am geeking out about. Thanks to alarm.com supporting multiple logins for their service, you can run the mobile app on separate mobile devices and each can be independently monitored and used with the geo-location function.

So far I have created two geo-zones, one for home and one for the area where I work during the day. The idea is that I can have the system notify my wife if she leaves the vicinity of our house without arming the system. And when I leave work for the day I can have the system perform other functions automatically for me, such as setting the thermostat to my desired comfort level so the house is nice and cool when I get home. These are just a few of the potential options available with this system. Once I get more devices to connect via Z-Wave I will be able to do some really cool stuff like door lock automation, lighting control, etc. But that is what I’ll write about in part 2 of this post.
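For the curious, a geo-zone rule like the one above boils down to a distance check. Here is a minimal Python sketch of the idea. It is not how alarm.com actually implements it: the coordinates, the 500 meter radius, and the names `GeoZone`, `inside` and `should_notify_unarmed` are all made up for illustration.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters


@dataclass(frozen=True)
class GeoZone:
    """A circular zone around a point (hypothetical model, not alarm.com's)."""
    name: str
    lat: float
    lon: float
    radius_m: float


def distance_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two points (haversine formula)."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlam = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def inside(zone, lat, lon):
    """True if the given position falls within the zone's radius."""
    return distance_m(zone.lat, zone.lon, lat, lon) <= zone.radius_m


def should_notify_unarmed(zone, was_inside, lat, lon, armed):
    """True when a phone has just left the zone while the system is disarmed."""
    return was_inside and not inside(zone, lat, lon) and not armed


# Made-up coordinates and radius standing in for the "home" zone.
home = GeoZone("home", 40.0, -75.0, 500.0)
```

With this model, a phone that was inside `home` and then reports a position about a kilometer away would trigger the notification only if the system is still disarmed.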

The only outstanding issue I need to work on so far is that I can’t seem to get the unit to chime properly when a door or window is opened. I’m sure this is another documentation issue. Most likely I need to move the sensors to a different group in order to get the chime. Right now everything is just silent, which isn’t bad, but with kids in the house it’s nice to hear when a door or window is opened.

To sum up, the system is well made and sophisticated, but still very manageable for a do-it-yourselfer like me. Anyone who knows how to use a search engine to answer their questions could install this system, although it would be nice if the documentation that comes with it were better. Hopefully the tips I’ve shared here will at least help someone else as they go through this same exercise.

I’ll update this post over the next few weeks as I learn more about the system and any quirks I observe. And keep an eye out for Part 2 of this post, where I’ll share my experiences with Z-Wave home automation. Coming soon.

Links:

Safe Mart – http://www.safemart.com

Alarm.com – http://www.alarm.com

Interlogix Simon XTi – http://www.interlogix.com/intrusion/brand/simon-xti/

Simon XTi interactive Demo – http://interlogix.com/simonxti_demo/

Gotcha when adding Exchange transport rule disclaimers

Recently I was involved in a project to test outgoing e-mail disclaimers for only a specific group of users in our company. Normally this would be a no-brainer using the standard features in Exchange transport rules to add a disclaimer using specific criteria. However, while testing the disclaimers with a colleague, he observed that his tests worked fine when sent from a mailbox on Exchange 2007, but failed to work at all when coming from a mailbox on Exchange 2010.

So I began troubleshooting this issue and trying to find the cause of the problem. In our company we actually have 3 generations of Microsoft Exchange running in a co-existence scenario (2003, 2007 and 2010 – with 2013 coming soon). I tried everything I could think of to get the transport rule disclaimer to work, testing it on my own mailbox which is hosted on an Exchange 2010 server. Sure enough the disclaimers did not work for my account.

I pored over KB articles and forum posts, scouring the internet for any tips that might at least point me in the right direction. After several hours of searching I stumbled upon a forum post indicating that I should check the “remote domains” properties in the Exchange shell. So I ran the command “Get-RemoteDomain | FL” and sure enough the “IsInternal” value was set to “true”. Given that our transport rule disclaimers were conditional upon being sent to recipients who were “external” to our Exchange organization, of course none of the rules would work.

In order to resolve the issue, I ran the following command: “get-remotedomain | set-remotedomain -isinternal $false”

This allowed Exchange 2010 hub transport servers to recognize all email recipient domains not configured in our Exchange organization as “external”. A second round of testing revealed that this change did in fact resolve the issue and the transport rule disclaimers worked perfectly for everyone, both Exchange 2007 and 2010 mailboxes.
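To make the cause easier to see, here is a toy Python model of the decision. It is a sketch of the behavior I observed, not Exchange's actual logic: `RemoteDomain`, `is_external_recipient` and `needs_disclaimer` are hypothetical names, `example.com` stands in for our accepted domain, and the "most specific pattern wins" matching is my own simplifying assumption.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass
class RemoteDomain:
    """Toy stand-in for a remote domain entry; the pattern '*' is the default entry."""
    pattern: str
    is_internal: bool


def is_external_recipient(address, accepted_domains, remote_domains):
    """Decide whether a recipient counts as external in this simplified model."""
    domain = address.rsplit("@", 1)[1].lower()
    if domain in accepted_domains:
        return False
    # Assumption: the longest (most specific) matching pattern wins.
    matches = [rd for rd in remote_domains if fnmatchcase(domain, rd.pattern)]
    if matches and max(matches, key=lambda rd: len(rd.pattern)).is_internal:
        return False  # flagged internal, so an "external only" rule never fires
    return True


def needs_disclaimer(recipients, accepted_domains, remote_domains):
    """The rule condition: at least one recipient is outside the organization."""
    return any(
        is_external_recipient(r, accepted_domains, remote_domains)
        for r in recipients
    )


accepted = {"example.com"}
before_fix = [RemoteDomain("*", is_internal=True)]   # what I found
after_fix = [RemoteDomain("*", is_internal=False)]   # after the change
```

In this model, a message to `bob@partner.example` gets no disclaimer with `before_fix` and does get one with `after_fix`, which matches what we saw in testing.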

I am amused and slightly annoyed that the vast majority of forum posts and KB articles I found about how to use Exchange transport rules to send outbound disclaimers make no mention of this possible “gotcha”. I’m sure there are limited circumstances that would result in this issue, which is probably why it was not mentioned in the articles I was reading, but I offer this as help to those who may face a similar situation.

Confusing behavior in Exchange 2007/2010 when changing Hub Transport DNS configuration

I recently ran into a configuration issue in Exchange 2007/2010 that left me scratching my head in bewilderment. After noticing some DNS resolution issues between several hub transport servers I began looking at individually and manually configuring DNS resolution settings on specific Exchange servers. In ESM I went to “Server Configuration > Hub Transport” and viewed the properties of each server. On the “Internal DNS lookups” and “External DNS lookups” tabs you get the option to choose either the DNS configuration from a specific network adapter or manually enter the DNS servers to use.

To save time, I began using the drop-down selection of specific network adapters to grab the DNS servers I wanted. However, there is an important “gotcha” here that is not indicated in the GUI or anywhere else that I’ve seen. When you use the drop-down NIC selections to grab DNS, you are actually querying the NICs of the server on which you are running ESM. So what happened in my case is that incorrect DNS servers were being assigned to remote Exchange servers, because I assumed that the NICs being listed in the drop-down were the NICs physically installed on the remote server for which I was viewing the properties. Big mistake!

I started noticing events in the logs complaining about inability to resolve DNS hosts. Once I started investigating this it dawned on me that the names of the NICs in the drop down listing never changed as I viewed the properties of different servers. It just happened that the NIC I chose did not have any DNS servers configured, so I was basically configuring those specific Exchange servers with no DNS at all on the hub transport DNS resolution configuration.

To resolve the issue, I manually specified the DNS servers to use for each specific Exchange server. That completely resolved the problem and got mail flowing again.
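The mix-up is easy to reproduce in a toy Python model. This is only a sketch of the behavior described above, not anything Exchange exposes: the `Server` class, both functions, the server and adapter names, and the 192.0.2.x documentation addresses are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Server:
    name: str
    # adapter name -> DNS servers configured on that adapter
    adapters: dict = field(default_factory=dict)
    # DNS servers the hub transport role will use for lookups
    transport_dns: list = field(default_factory=list)


def set_dns_from_adapter(console_host, target, adapter_name):
    """Model of the gotcha: the adapter is looked up on the console host,
    not on the target server whose properties are being edited."""
    target.transport_dns = list(console_host.adapters[adapter_name])


def set_dns_manually(target, dns_servers):
    """Model of the fix: spell out the DNS servers for each target explicitly."""
    target.transport_dns = list(dns_servers)


console = Server("hub01", adapters={"LAN": ["192.0.2.10"], "Backup LAN": []})
remote = Server("hub02", adapters={"LAN": ["192.0.2.20"]})

# Picking an adapter that has no DNS on the console host wipes out the
# remote server's transport DNS, even though its own "LAN" adapter is fine.
set_dns_from_adapter(console, remote, "Backup LAN")
```

After that last call `remote.transport_dns` is an empty list, which is the "no DNS at all" state that stopped mail flow. Calling `set_dns_manually(remote, ["192.0.2.20"])` puts it right.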

Just kind of curious why Microsoft would allow you to manage remote servers from one location/instance of ESM but only show you the NICs on the local server when configuring remote server hub transport properties.

De-evolving technologically

A lot has happened since I last posted, but I thought it would be good to post a quick update on the changes I’ve made technologically over the last few months.

The first major change is the decommissioning of my personal Exchange mail system. My home server setup was getting far too complex and expensive to maintain, so I decided to do some downsizing and basically get rid of a bunch of servers. At the time I began to remove servers, there were 6 being used full time. It got to a point where I had built a powerful white-box server on which to run VMware ESXi 5, which allowed me to virtualize all of my servers. There were 2 for AD/DNS/DHCP, 2 for Exchange 2010 in a DAG, and 1 for simple/general tasks like backups and game servers.

To be honest, my wife and I got quite accustomed to having enterprise-level features in our home e-mail solution. As someone with about 12 years of Exchange experience I had it set up right, which included custom domain names, an SSL certificate, networking to support ActiveSync for our iPhones, etc. I had wanted to simplify things for quite a while, but never could find just the right solution to handle our e-mail and calendaring in a way that would remain highly functional and not be difficult to migrate to. Then along came a new service by Microsoft called Outlook.com. Long story short, Outlook.com is everything we needed to get rid of Exchange and simplify the home setup.

Our email, calendars, contacts, etc. were all easily migrated to Outlook.com. Of course I had to dump about 8GB of archived messages to PST files out of Exchange, but that’s a normal part of the process. For the time being, we’re still using Microsoft Outlook to grab old messages, but for all new messaging features we’re going with Windows Live Mail. It integrates nicely with Outlook.com and gives us a very nice user experience with pretty much all of the features that we were accustomed to while using Exchange.

Unfortunately, the networking side of things hasn’t transitioned quite so easily. When we had all of these servers running they were responsible for most of our networking services, like DNS/DHCP. Transitioning to router-based network services was a bit of a challenge. I ended up buying an Asus RT-N66U dual band 802.11n Wi-Fi router. The Verizon FIOS router is forwarding all traffic to the Asus router (DMZ). I’m doing all my port forwarding in the Asus router rather than having the Verizon router handle that, mainly because the Asus router won’t allow many of its advanced features if it’s configured as a mere AP. It must be in router mode for the bells and whistles.

An unfortunate side effect of this transition is that all of our Apple devices appear to be rather slow on Wi-Fi using the new home network setup. I’ve run lots of diagnostics and tried many tweaks but nothing I’ve done so far has restored pre-transition device speed for the iDevices. Strangely enough my laptop works fairly well on both the 2.4GHz and 5GHz Wi-Fi networks. I suspect there is some advanced tweaking that could be done to improve the iDevice performance over Wi-Fi, but so far I haven’t figured it out.

At this point, my office has one nice PC in it, a PC that is quiet and easy to maintain. The big server is now going to go up for sale, hopefully on Craigslist. After adding everything up, it seems I have approximately $1,200 worth of server/parts available for sale. This was a great machine, dual quad core Opteron 2.2GHz CPUs, 32GB DDR2 memory, 6 SATA HDD for over 5 TB of disk space, etc. This machine was perfect for virtualizing servers, the only downside is the noise and heat.

To sum up, I’m no longer running a bunch of servers at home. No more Exchange for home messaging, no more noise, no more extra heat in the office, it’s all gone. And it’s interesting how quickly we grew accustomed to the noise in the office and how much we enjoy the quiet now that it’s gone.

In addition to the servers being removed, I also finally got us off the Ooma VOIP service. Someone purchased the Ooma box from me via Craigslist and took it off my hands. Since we’ve bundled phone services with Verizon FIOS there was simply no further need for an additional home phone service.

Outlook.com is handling our messaging needs very nicely. The web interface is easy to use and very clean. It supports ActiveSync via the Hotmail connector in the Apple Mail client, which is very nice. The only things we really miss are server-based distribution lists and shared calendars. However, none of that was a deal-breaker for us.

As a result of the downsizing, it looks like we will be saving approximately $600 a year in services and fees from all of the various resources I needed in order to run such a system at home. That includes Microsoft licensing and domain names, SSL, etc. Needless to say this transition is going to be a cost saver with only a few minor networking kinks to work out.

Drive failure in my personal Exchange 2010 server

A few months ago I upgraded my home Exchange mail system from Exchange 2007 to Exchange 2010. During this process I added a secondary server that was identical to the main server. By having two Exchange 2010 servers I was able to utilize the DAG feature that is new in this version of Exchange.

All that to say: yesterday my main Exchange server’s disk drive failed. I got home from work and shut down the server, removed the hard drive and tried to run some tests. All I got was clicking, and the drive won’t even show its partition table. Fortunately, since I was running Exchange 2010 with a DAG for redundancy, I was able to quickly activate the database copies on the secondary server, thereby avoiding any downtime or data loss on my Exchange system. Currently, the secondary server is actively hosting my databases. My mobile phone still works through ActiveSync, OWA works, incoming and outgoing mail also, no problems!

I’ve purchased a replacement drive which should be here in a day or two. The downside is that the drive has failed to a degree where recovering it is highly unlikely. Since I don’t have RAID on any of my servers (due to cost) I will end up having to rebuild this server. My next steps will include reinstalling the operating system and Exchange 2010. I plan on using a recovery mode install of Exchange 2010, which should restore all my Exchange settings and configuration, leaving very little to do but tweak a few things and make sure my DAG is re-created. My only other option is to manually remove all traces of the failed server from Active Directory and then re-install Exchange in normal mode. This would be a little more work, so I’m hoping the recovery mode install will work properly.

I LOVE EXCHANGE 2010!

Getting Data Protection Manager agent to work on remote servers

I am now using Microsoft Data Protection Manager 2010 to handle backups of my personal Exchange mail server. It took a while to figure out how to set this up since I had never used this product before and knew nothing about it until talking to my instructor at a recent Exchange 2010 training class I attended. I learned a few tricks that may help someone else quickly avoid problems during a deployment of DPM 2010.

First, the DPM server must be a standalone server. It cannot be installed on an Exchange server or a domain controller. In addition, you should install the AD management tools on the server, as without them you will get a cryptic error message telling you that the server cannot communicate with AD and thinks you are in a workgroup or have a mis-configured DNS client.

Now that you have the DPM server installed, it’s time to deploy the agent. You can deploy the agent to remote computers from a wizard-driven interface, however I found that this did NOT work for me at all. I had to manually install the agent by running the agent installer directly from C:\Program Files\Microsoft Data Protection Manager\DPM\ProtectionAgents. Think you are done? Not quite! Now open a command prompt and navigate to the DPM\bin directory. Execute the command “setdpmserver -dpmservername nameofyourserverhere” and press Enter. For the server name, use the FQDN (i.e. servername.domain.local). The documentation says to use domain\servername, but this DOES NOT WORK.
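If you script this step for several servers, a small guard can catch the wrong name format before the command runs. The Python sketch below only builds the argument list for the command quoted above. The function name `build_setdpmserver_args` and the host name `dpm01.domain.local` are made up for illustration, and the "must contain a dot" check is just my rough test for an FQDN.

```python
def build_setdpmserver_args(dpm_server_fqdn):
    """Return the argument list for the setdpmserver command quoted above.

    Rejects the domain\\servername form and bare host names, since only
    the FQDN worked in the setup described in this post.
    """
    name = dpm_server_fqdn.strip()
    if "\\" in name:
        raise ValueError("use the FQDN, not the domain\\servername form")
    if "." not in name.strip("."):
        raise ValueError("expected an FQDN such as servername.domain.local")
    return ["setdpmserver", "-dpmservername", name]


# Hypothetical DPM server name; substitute your own FQDN.
args = build_setdpmserver_args("dpm01.domain.local")
```

The resulting list could be handed to something like `subprocess.run(args, cwd=...)` from the agent's bin directory, but I have only ever run the command by hand.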

Once you do the above, you can go into the services control panel and set the DPM server service to automatic and start it. Now you can go to your DPM server and configure protection groups and add member servers. Enjoy!

Migrating to Exchange 2010

Last week, I took on the task of migrating my personal home mail system from Exchange 2007 to Exchange 2010. As usual with Microsoft Exchange, there is no direct upgrade. A migration from one system to the other is the main method of accomplishing this task.

This project was accelerated to the top of my to-do list after taking a week-long training course at Tech Sherpas on Exchange 2010. The new high availability features and the added resiliency of Exchange 2010 sold me on the new flavor of Exchange very early on. I’m going to go through some of the things I experienced and learned during my migration. I’ll be going through another migration soon and will update this post with any added information I discover during a second go-round.

It all starts with Active Directory and DNS. Planning your Active Directory and DNS namespace is critical to having a healthy and resilient Exchange mail system. I made a critical error a few years ago when setting up Active Directory in my home office. I had chosen the AD domain name “home.us” to use internally, since I was not anticipating using any products that would create a conflict when using a public domain name internally. The problem I had was with SSL certificates. I wanted to get an SSL certificate that used SANs so that I could access OWA and ActiveSync internally and externally without getting a certificate prompt. The issues started when I tried to generate a certificate using the home.us domain name. Since it’s a public domain, any time I tried to add a SAN using that domain, the request would fail, and whoever owns home.us would have received the requests to create a certificate on that domain. I called the SSL certificate provider I was using and confirmed with them that there is no way around this. I would have to change my internal domain name or not use it at all in my certificate. So that is where my post about renaming an AD domain comes in. Once I renamed my AD domain to something clearly internal only, I was able to generate my SSL certificate with all the SANs that I needed.

Now regarding the actual migration to Exchange 2010, it was a bit tricky. I ran into problems exporting my mailboxes from Exchange 2007. I had to install the Exchange management tools on another server and install Outlook and all the prerequisites required to make the export work, which was a huge pain. I’m seriously hoping that Microsoft one day makes mailbox exports a little easier and requires less legwork to make them happen. I finally got my mailboxes exported and jotted down most of my critical Exchange settings.

Normally you would just install Exchange 2010 on a new server and migrate your mailboxes and other content over to the new server. You can then shut down the old server and you are done. However, I had the domain rename to worry about, which cannot be done while Exchange exists in your AD domain. By removing Exchange completely, I was not doing a migration in the strict sense of the word, but instead a new installation, and I ended up importing mailboxes and manually restoring settings.

I installed Exchange 2010 with no problems on my first server. I then tried to import my mailboxes and ran into problems. It turns out there is a known issue with Exchange 2010 and importing mailboxes on a single server. So I set up a second Exchange 2010 server temporarily and was then able to try again. This time I found that I could not export the mailboxes because the mailbox server absolutely has to have Outlook 2010 installed on it. This is completely different from previous versions of Exchange, where we are warned not to install Outlook on the Exchange server. I finally got all the requirements in place and was able to import my mailboxes. So far so good.

I had some difficulty getting my outbound SMTP connector re-configured, but I think that issue was only due to me fat-fingering the password or authentication methods. The next step was to try to take advantage of Enterprise features like DAGs for high availability. The first issue I ran into here was that Exchange 2010 requires a host operating system of Server 2008 Enterprise edition in order for this to work (since clustering is used in the background). My second server was only Server 2008 Standard edition, so I had to reload the server using Enterprise edition in order to proceed with my setup. I finally had both servers running the right operating system and the same version of Exchange 2010, ready to go. Oh, one little caveat is that if you install any of the update rollups for Exchange 2010, you will run into issues when trying to uninstall Exchange 2010. You get an error saying that a previous version of the product is already installed, and it recommends removing the previous version before installing this one. To get around this, just remove the update rollup and reboot. Then re-apply the update rollup and try the uninstall again. That worked for me and I was able to do my uninstalls with no further problems, although this took me a few hours to figure out.

The end result of hours of work for most of the day on Saturday was two fully functional Exchange 2010 servers with working mailboxes, incoming and outgoing mail, and a partially functional SSL certificate. The certificate issues were resolved a day or two later once I finally got my certificate straightened out with the provider. I had to re-key it a few times and wait for the e-mail confirmation.

Now to work on high availability. I created a Database Availability Group (DAG for short) and ran into some small issues with permissions. I wanted to use my AD domain controller as my “witness” for the DAG, but I would get errors when trying to create the DAG which I discovered were actually permission errors. If your witness server is not another Exchange server, you must add the “Exchange Trusted Subsystem” Security group to the local Administrators group of the server you are going to use. Since in my case this is a domain controller on a private home office system, I just added the group to the Administrators group in AD, which accomplished what I needed to get this to work. Once that was taken care of, I was able to create a DAG successfully.

Once the DAG was created, I proceeded to add server members to it, which took longer than I expected but worked absolutely perfectly. Once that step is done, you can proceed to add copies of mailbox databases to the DAG. I added copies of my two databases to the DAG, made one server the first preferred server, and made the second server the failover by assigning it a preference value of “2”.
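The preference numbers can be pictured with a small toy model. This is only a sketch of the idea, not how Exchange itself decides: Exchange weighs more than the preference number when it picks a copy to activate. The server names `EX01` and `EX02`, the `healthy` flag and the function name are all made up for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class DatabaseCopy:
    server: str                  # hypothetical server name
    activation_preference: int   # 1 = first preferred, 2 = failover, ...
    healthy: bool = True         # simplified stand-in for copy status


def pick_copy_to_activate(copies: List[DatabaseCopy]) -> Optional[DatabaseCopy]:
    """Return the healthy copy with the lowest preference number, or None."""
    candidates = [c for c in copies if c.healthy]
    if not candidates:
        return None
    return min(candidates, key=lambda c: c.activation_preference)


copies = [DatabaseCopy("EX01", 1), DatabaseCopy("EX02", 2)]
print(pick_copy_to_activate(copies).server)  # EX01 while both copies are healthy

copies[0].healthy = False                    # simulate losing the preferred server
print(pick_copy_to_activate(copies).server)  # EX02 takes over
```

In this model the copy with preference “2” is only chosen when the copy with preference “1” is unavailable, which matches the failover role described above.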

I did some basic tests of the DAG failover and found that it worked perfectly. I could switch back and forth between servers and it only took a few seconds at most. During my testing, OWA clients would momentarily get a message saying an item was unavailable if they just happened to be opening a message during the first few seconds after switching servers. Outlook clients would also only have a momentary issue if a user happened to be opening a message in online mode while the transition of servers was taking place. For all intents and purposes the switchover of mailbox servers is transparent to the users, which is awesome!

Another great feature of Exchange 2010 is that you can now move mailboxes between mailbox servers while the users are connected to their mailboxes. The new “move request” feature allows the move to take place with the mailbox in use and any changes to the mailbox after the move is complete are written to the database to bring it current on the new mailbox server. Users that are connected via Outlook will get a message saying that changes have been made that require Outlook to be restarted. Once you restart Outlook, you are connected to your new mailbox server automatically (assuming the old server is still online to allow redirection to occur).

How about backups? This is one of my favorite things about the new Exchange 2010 DAGs. Because you can now have super easy high availability in Exchange 2010, you get the added perk of making backups more efficient. How? Simple: back up your secondary DAG member databases, assuming you have at least one other server in the DAG with a real-time copy of the database in question. By backing up the secondary copy, you reduce mailbox server load, since backups are not running on a server users are connecting to. Because it’s a real copy of the database, you can do brick-level restores and anything else you would normally do in your backup strategy.

To restore content in Exchange 2010, rely on your deleted items retention period for most of the common short-range restore requests. For anything older than that, you can restore a backup of a mailbox or an entire database to an Exchange recovery database. Then use Exchange Management Shell cmdlets to recover specific information or the entire mailbox to the production database or mailbox.

A few other perks that aren’t so loudly advertised about Exchange 2010:

1. OWA is now supported fully in other browsers. Yes, it works in Firefox without major rendering issues or a requirement to use the “light” version.

2. OWA now supports conversation views of message threads.

3. Exchange Control Panel lets administrators and regular users manage many aspects of their DL membership and account info right from their web browser. This includes their mobile device management, contact info, ability to create new DLs, etc.

4. There is better role based management of feature/security access in Exchange 2010. You can define custom roles or use the default roles provided by Microsoft. This is good for large companies who want to separate roles between admins.

5. MailTips are cool! You can create custom MailTips to be displayed at all times, in addition to the intelligent MailTips triggered by an OOO or calendar conflicts. MailTips are informational messages displayed to the sender of new messages or calendar requests when using Outlook 2010 or OWA. For example, if you send a user a meeting request and the user isn’t available due to a calendar conflict, MailTips will let you know before you ever send your message and will suggest alternate dates for your meeting based on the user’s availability. In addition, if you try to send a message to a user who has the OOO turned on, you will get a MailTip telling you the user is out of the office, along with a preview of the first few lines of the OOO message the user customized in their auto-reply.

6. If you will be using Exchange 2010 high availability to handle your backups, using DAGs and multiple database copies set up with a log replay lag, you can go ahead and set your logging to “circular”. However, if you will be using another product to back up Exchange, such as Data Protection Manager or Backup Exec, then you will want to keep your logging set up as normal, so that your backups will clear committed logs. Otherwise you will get warnings, in Data Protection Manager specifically, that a synchronization isn’t possible because you are using circular logging and only full copies of the database can be used.

There is so much more I could mention, but those are probably some of the best parts of Exchange 2010. For more information I recommend giving the trial version a try, or downloading a virtual hard drive, to see for yourself all of the major improvements in Exchange 2010. Some of the documentation isn’t very detailed yet, and I had a hard time finding step-by-step instructions, so this seems to be one of those times when a good lab setup for testing is a great way to get familiar with the caveats of how things work and what is required to get them configured.

My first AD domain rename

I recently had the pleasure of getting to perform my very first Active Directory domain rename. This situation was the result of some bad planning on my part a few years ago when I first set up Active Directory in my home office, but I’ll talk about that in a separate post that deals with Exchange 2010.

To do the rename, I was expecting to see a “Rename domain” option in ADUC, but as it turns out it’s all command line. So after a few commands with the rendom tool, I was able to rename my AD domain. I first had to remove Exchange completely, then I was able to perform the rename. It wasn’t that bad and went rather well. I did run into a few bumps, such as my DNS server needing a manual re-config, and the connected client machines (desktop and laptop) not automatically updating their DNS suffix.

I wouldn’t recommend doing this unless absolutely necessary, and I very nearly did just start over from scratch, but I wanted to press on and see if I could get everything to work on a renamed domain. To my delight, it worked and I’ve even got Exchange 2010 running now. The only thing I would recommend is to re-install Exchange on a server with a different node name than what it had previously. This caused me all sorts of little issues that I’ll deal with later in a separate post.

No data connection after iOS 4 upgrade on iPhone 3GS

I have an iPhone 3GS that was running 3.1.3. Yesterday iOS 4 was released by Apple, so I decided to do the free upgrade. Everything went OK, and I didn’t realize I had a problem because I am constantly on wifi at work and at home. But today when I was at lunch, I noticed that my 3G cellular data connection was not working. No mail, no Safari web browsing, nothing. I could make and receive phone calls and text messages, but that was it.

I had the 3G icon in the top left corner of the screen but I could not check mail or browse the web in Safari. I tried rebooting, disabling and re-enabling the data connection, and even resetting the network settings, but none of that fixed the issue. Wifi would work without a problem, but off wifi, none of the above would work.

I connected my phone to my computer and used the iPhone configuration tool from Apple to open the device console log. From there I found errors and warnings related to the network config. A few Google searches later I found some threads on the Apple support forums with users complaining of the same issues I was having. Someone in one of the forums suggested the issue could be caused by a glitch in the APN settings.

I then used the iPhone configuration tool from Apple to create a custom configuration profile where I manually input the correct AT&T APN settings. After applying this profile to my iPhone, I am now able to check mail, browse the web, etc. According to the support forums, this issue does not always occur, but there are a significant number of devices where this does occur. Apple and AT&T were reported to be at a loss relating to these issues when users would call for support.

At this point, the only other option for correcting this issue is to do a restore in iTunes and set up the phone as a new device, rather than restoring from backup. The downside here is the loss of SMS/MMS, call history, app data, etc.

The iPhone configuration tool is available from Apple here: http://support.apple.com/kb/DL926

The correct APN settings for AT&T:

apn: wap.cingular

user: wap@cingulargprs.com

pass: CINGULAR1

(All other settings under APN must remain blank)
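For anyone who would rather script the profile than click through the configuration tool, here is a rough sketch that writes the three values above into a configuration profile with Python’s standard `plistlib`. Treat it as an illustration under assumptions, not the tool’s exact output: the payload key names (`com.apple.apn.managed`, `DefaultsData`, `apns` and so on) are written from my recollection of Apple’s configuration profile format and should be checked against Apple’s documentation, and the identifiers, display names and file name are made up.

```python
import plistlib
import uuid


def build_apn_profile(apn: str, username: str, password: str) -> bytes:
    """Build an unsigned configuration profile holding a single APN entry."""
    apn_payload = {
        # Key names below are assumptions based on Apple's profile format.
        "PayloadType": "com.apple.apn.managed",
        "PayloadVersion": 1,
        "PayloadIdentifier": "example.apn.payload",   # hypothetical identifier
        "PayloadUUID": str(uuid.uuid4()).upper(),
        "PayloadDisplayName": "APN settings",
        "PayloadContent": [{
            "DefaultsDomainName": "com.apple.managedCarrier",
            "DefaultsData": {
                # Only the three values from the post; everything else stays unset.
                "apns": [{
                    "apn": apn,
                    "username": username,
                    "password": password.encode("utf-8"),  # stored as plist <data>
                }],
            },
        }],
    }
    profile = {
        "PayloadType": "Configuration",
        "PayloadVersion": 1,
        "PayloadIdentifier": "example.apn.profile",   # hypothetical identifier
        "PayloadUUID": str(uuid.uuid4()).upper(),
        "PayloadDisplayName": "AT&T APN fix",
        "PayloadContent": [apn_payload],
    }
    return plistlib.dumps(profile)


if __name__ == "__main__":
    data = build_apn_profile("wap.cingular", "wap@cingulargprs.com", "CINGULAR1")
    with open("att-apn.mobileconfig", "wb") as handle:  # hypothetical file name
        handle.write(data)
```

Leaving every other APN key out of the dictionary is the scripted equivalent of keeping the remaining fields blank.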

I hope this information will be helpful to someone.

Change mailbox alias or re-create Exchange mailboxes results in NDR from bad Outlook recipient cache

In the past when renaming or re-creating mailboxes in Exchange I’ve had issues where the Outlook cached entry for the user no longer works when internal users send mail to the specified mailbox. This is because of our dual Exchange environment running mixed versions of Exchange. The original mailbox was created under Exchange 2003 and ultimately moved to Exchange 2007, but preserving the Exchange 2003 Mailbox reference for X500. When a mailbox is re-created or the alias is changed, it can break these cached entries in Outlook. Today, I discovered a way to work around this issue from this article.

In the future if I need to change alias (due to name change) or if there are problems resulting in a need to re-create the mailbox, the steps in the article can be used to avoid having mail delivery problems for the affected user.

• The listed steps will allow the cached Outlook entries to properly resolve the user mailbox. No user action is required to make this work.

• You can find the proper alias for the user in an NDR message or by going to the cached entry in Outlook and viewing its properties. It will be a string in one of the values displayed in the properties. (Note: this only works before you make the changes above.)

• The updated string for the Exchange 2007 environment is automatically added to re-created mailboxes in X400 form. The old pointers can be added using X500.

• This issue only affects mail sent from internal clients using the cached entry in Outlook, all SMTP mail flow and external mail will continue to flow to the user uninterrupted regardless of this issue.
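As a sketch of the second bullet, the helper below turns the address shown in an NDR into the X500 string you would add back to the mailbox. It assumes the NDR lists the failed recipient in the `IMCEAEX-` encoded form, where `_` stands for `/` and `+XX` is a hex-encoded character. The function name and the sample address are made up for illustration.

```python
import re


def imceaex_to_x500(ndr_address: str) -> str:
    """Convert an IMCEAEX-encoded NDR address into an X500 proxy address."""
    # Drop the trailing @domain part that the NDR appends.
    local_part = ndr_address.rsplit("@", 1)[0]

    prefix = "IMCEAEX-"
    if not local_part.upper().startswith(prefix):
        raise ValueError("not an IMCEAEX-encoded address")
    encoded = local_part[len(prefix):]

    # Underscores stand for slashes. Do this before hex decoding so that a
    # literal underscore (encoded as +5F) is not turned into a slash.
    with_slashes = encoded.replace("_", "/")

    # Decode +XX hex escapes such as +20 (space), +28 "(", +29 ")", +2E ".".
    decoded = re.sub(
        r"\+([0-9A-Fa-f]{2})",
        lambda match: chr(int(match.group(1), 16)),
        with_slashes,
    )
    return "X500:" + decoded


sample = ("IMCEAEX-_O=EXAMPLE_OU=FIRST+20ADMINISTRATIVE+20GROUP"
          "_CN=RECIPIENTS_CN=JSMITH@example.com")
print(imceaex_to_x500(sample))
# X500:/O=EXAMPLE/OU=FIRST ADMINISTRATIVE GROUP/CN=RECIPIENTS/CN=JSMITH
```

The resulting string is what gets added as an extra X500 address on the re-created mailbox so that the old cached Outlook entries resolve again.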